SPEC SFS®2014_vda Result

Copyright © 2016-2019 Standard Performance Evaluation Corporation


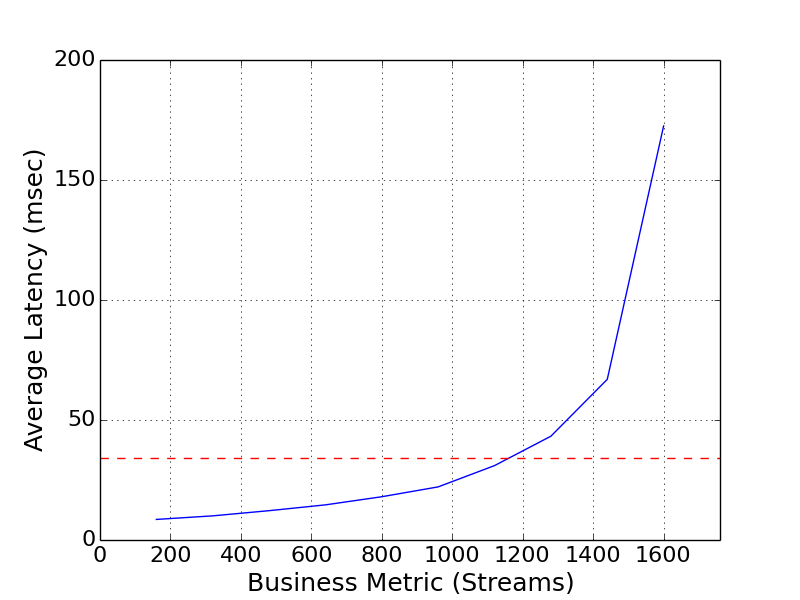

| IBM Corporation | SPEC SFS2014_vda = 1600 Streams |
|---|---|
| IBM Spectrum Scale 4.2 with Elastic Storage Server GL6 | Overall Response Time = 33.98 msec |

| IBM Spectrum Scale 4.2 with Elastic Storage Server GL6 | |
|---|---|
| Tested by | IBM Corporation |
| Hardware Available | December 2014 |
| Software Available | May 2016 |
| Date Tested | April 2016 |
| License Number | 11 |
| Licensee Locations | Almaden, CA USA |

IBM Spectrum Scale helps solve the challenge of explosive growth of unstructured data against a flat IT budget. Spectrum Scale provides unified file and object software-defined storage for high-performance, large-scale workloads on-premises or in the cloud. Built upon IBM's award-winning General Parallel File System (GPFS), Spectrum Scale includes the protocols, services and performance required by many industries, including Technical Computing, Big Data, HDFS and business-critical content repositories. IBM Spectrum Scale provides world-class storage management with extreme scalability, flash-accelerated performance, and automatic policy-based storage tiering from flash through disk to tape, reducing storage costs by up to 90% while improving security and management efficiency in cloud, big data and analytics environments.

IBM Elastic Storage Server is an optimized disk storage solution bundled with IBM hardware and innovative IBM Spectrum Scale RAID technology that can perform fast background disk rebuilds in minutes with no impact on application performance. The solution also ensures data integrity from the application down to the storage with end-to-end checksums, and provides unsurpassed end-to-end data availability, reliability and integrity through data-efficient advanced erasure coding.

| Item No | Qty | Type | Vendor | Model/Name | Description |

|---|---|---|---|---|---|

| 1 | 8 | Spectrum Scale Client | IBM | X3650-M4 | Spectrum Scale client nodes. |

| 2 | 1 | Elastic Storage Server GL6 | IBM | 5146-GL6 | The ESS-GL6 contains two 8247-22L IBM Elastic Storage Server nodes and 6 DCS3700E storage expansion drawers. The storage expansion drawers were populated with a total of 348 2 TB 7200 RPM NLSAS drives. The ESS also included 3 optional two-port (feature code #EL3D) FDR InfiniBand adapters per server node. |

| 3 | 2 | InfiniBand Switch | Mellanox | SX6036 | 36-port non-blocking managed 56 Gbps InfiniBand/VPI SDN switch. |

| 4 | 1 | Ethernet Switch | SMC Networks | SMC8150L2 | 50-port 10/100/1000 Mbps Ethernet switch. |

| 5 | 8 | InfiniBand Adapter | Mellanox | MCX456A-F | 2-port PCI FDR InfiniBand adapter used in the client nodes. |

| Item No | Component | Type | Name and Version | Description |

|---|---|---|---|---|

| 1 | Client Nodes | Spectrum Scale File System | 4.2.0.3 | The Spectrum Scale File System is a distributed file system that runs on both the Elastic Storage Server nodes and client nodes to form a cluster. The cluster allows for the creation and management of single namespace file systems. |

| 2 | Client Nodes | Operating System | Red Hat Enterprise Linux 7.2 for x86_64 | The operating system on the client nodes was 64-bit Red Hat Enterprise Linux version 7.2. |

| 3 | Elastic Storage Server | Storage Server | 4.0 | The ESS version 4.0 provides all of the necessary software to be compatible with Spectrum Scale version 4.2.0.3 running on the client nodes. |

Spectrum Scale Client Nodes

| Parameter Name | Value | Description |
|---|---|---|
| verbsPorts | mlx5_0/1/1 mlx5_1/1/2 | InfiniBand device names and port numbers. |
| verbsRdma | enable | Enables InfiniBand RDMA transfers between Spectrum Scale client nodes and Elastic Storage Server nodes. |
| verbsRdmaSend | 1 | Enables the use of InfiniBand RDMA for most Spectrum Scale daemon-to-daemon communication. |
| Hyper-Threading | disabled | Disables the use of two threads per core in the CPU. The setting was changed in the BIOS menus of the client nodes. |

The first three configuration parameters were set using the "mmchconfig" command on one of the nodes in the cluster. The verbs settings in the table above allow for efficient use of the InfiniBand infrastructure. The settings determine when data are transferred over IP and when they are transferred using the verbs protocol. The InfiniBand traffic went through two switches, item 3 in the Bill of Materials. The last parameter disabled Hyper-Threading on the client nodes.
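
As an illustration of how the three verbs parameters could be passed to "mmchconfig", the sketch below assembles a single invocation from the table values without executing it. The report does not say whether the settings were applied in one command or which nodes were targeted, so the combined form and the node class name `clientNodes` are assumptions made only for this example.

```python
import shlex

# Values copied from the parameter table above.
VERBS_SETTINGS = {
    "verbsPorts": "mlx5_0/1/1 mlx5_1/1/2",
    "verbsRdma": "enable",
    "verbsRdmaSend": "1",
}


def mmchconfig_command(settings, node_class=None):
    """Return an mmchconfig invocation as an argument list (not executed)."""
    # mmchconfig accepts a comma-separated list of attribute=value pairs.
    attrs = ",".join(f"{name}={value}" for name, value in settings.items())
    argv = ["mmchconfig", attrs]
    if node_class is not None:
        # -N limits the change to the named nodes or node class.
        argv += ["-N", node_class]
    return argv


# "clientNodes" is a hypothetical node class, not a name from the report.
argv = mmchconfig_command(VERBS_SETTINGS, node_class="clientNodes")
print(shlex.join(argv))
```

Building an argument list rather than a raw string keeps the space inside the verbsPorts value intact; `shlex.join` adds the quoting only when the command is printed for a shell.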

Spectrum Scale Client Nodes

| Parameter Name | Value | Description |
|---|---|---|
| maxFilesToCache | 11M | Specifies the number of inodes to cache for recently used files that have been closed. |
| maxMBpS | 10000 | Specifies an estimate of how many megabytes of data can be transferred per second into or out of a single node. |
| maxStatCache | 0 | Specifies the number of inodes to keep in the stat cache. |
| pagepool | 32G | Specifies the size of the cache on each node. |
| pagepoolMaxPhysMemPct | 90 | Percentage of physical memory that can be assigned to the page pool |
| workerThreads | 1024 | Controls the maximum number of concurrent file operations at any one instant, as well as the degree of concurrency for flushing dirty data and metadata in the background and for prefetching data and metadata. |

Elastic Storage Server

| Parameter Name | Value | Description |
|---|---|---|
| nsdRAIDTracks | 1M | Specifies the number of tracks in the Spectrum Scale Native RAID buffer pool |

The configuration parameters were set using the "mmchconfig" command on one of the nodes in the cluster. Both the client nodes and the ESS used mostly default tuning parameters. The parameters listed in the table above reflect values that might be used in a typical streaming environment.
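
To put the pagepool setting in context, the following sketch compares the 32G pagepool with the 128 GiB of system memory reported for each client node and with the pagepoolMaxPhysMemPct limit of 90. It assumes the "G" suffix denotes GiB; the helper `parse_size` is written for this example and is not part of Spectrum Scale.

```python
# Binary multipliers for size suffixes (assumed interpretation).
UNITS = {"K": 2**10, "M": 2**20, "G": 2**30}


def parse_size(text):
    """Convert a value such as '32G' to bytes, treating suffixes as binary."""
    suffix = text[-1].upper()
    if suffix in UNITS:
        return int(text[:-1]) * UNITS[suffix]
    return int(text)


client_memory_bytes = 128 * 2**30    # 128 GiB per client node
pagepool_bytes = parse_size("32G")   # pagepool
max_pct = 90                         # pagepoolMaxPhysMemPct

pagepool_pct = 100 * pagepool_bytes / client_memory_bytes
print(f"pagepool uses {pagepool_pct:.0f}% of client memory (limit {max_pct}%)")
```

Under these assumptions the pagepool takes a quarter of each client's memory, well below the configured ceiling.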

There were no opaque services in use.

| Item No | Description | Data Protection | Stable Storage | Qty |

|---|---|---|---|---|

| 1 | 348 2 TB NLSAS drives in the ESS. | Spectrum Scale Native RAID declustered arrays | Yes | 1 |

| 2 | 300 GB 10K mirrored HDD pair in Spectrum Scale client nodes used to store the OS. | RAID-1 | No | 8 |

| 3 | 600 GB 10K SAS mirrored HDD pair in ESS nodes used to store the OS. | RAID-1 | No | 2 |

| Number of Filesystems | 1 |
|---|---|
| Total Capacity | 128 TiB |
| Filesystem Type | Spectrum Scale File System |

A single Spectrum Scale file system was created with an 8 MiB block size for data, a 1 MiB block size for metadata, a 4 KiB inode size, and a 128 MiB log size. The file system was spread across all of the Network Shared Disks (NSDs) defined by the ESS. Each client node and ESS node mounted the file system. A policy was applied to the file system that placed data and metadata in separate pools as defined by the NSD configuration.

The client nodes each had an ext4 file system that hosted the operating system.

The ESS was configured with two declustered arrays, each containing 174 2 TB NLSAS drives. The arrays were configured to tolerate the failure of any 2 drives or any single DCS3700E enclosure.

Two NSDs were created using two 64 TiB 8+2P vdisks, one per declustered array, and were designated to hold Spectrum Scale file system data. Two additional NSDs were created using two 500 GiB 3-way replicated vdisks, one per declustered array, and were designated to hold Spectrum Scale file system metadata. Each vdisk was created within a declustered array, and the blocks of the vdisk were spread across all the available physical disks in the array.
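
The vdisk layout can be checked against the reported total capacity with a few lines of arithmetic. This is a sketch using only figures from the report; the raw-space numbers ignore any spare space or internal overhead that Spectrum Scale Native RAID reserves, which the report does not quantify.

```python
# Drive and array counts from the report.
drives_total = 348
arrays = 2
drives_per_array = drives_total // arrays            # 174 drives per array

# Data: one 64 TiB 8+2P vdisk per declustered array.
data_vdisk_tib = 64
data_strips, parity_strips = 8, 2
usable_data_tib = arrays * data_vdisk_tib
raw_data_tib = usable_data_tib * (data_strips + parity_strips) / data_strips

# Metadata: one 500 GiB 3-way replicated vdisk per declustered array.
meta_vdisk_gib = 500
replicas = 3
usable_meta_gib = arrays * meta_vdisk_gib
raw_meta_gib = usable_meta_gib * replicas

print(f"drives per array:  {drives_per_array}")
print(f"usable data:       {usable_data_tib} TiB (raw {raw_data_tib:.0f} TiB)")
print(f"usable metadata:   {usable_meta_gib} GiB (raw {raw_meta_gib} GiB)")
```

The usable data figure matches the 128 TiB total capacity listed for the file system.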

The cluster used a two-tier architecture. The client nodes performed file-level operations and transmitted the data requests to the ESS nodes, which performed the block-level operations. In Spectrum Scale terminology, the load generators are NSD clients and the ESS nodes are NSD servers. The NSDs were the storage devices specified when creating the Spectrum Scale file system.

| Item No | Transport Type | Number of Ports Used | Notes |

|---|---|---|---|

| 1 | 1 GbE cluster network | 10 | Each node connects to a 1 GbE administration network with MTU=1500 |

| 2 | FDR InfiniBand cluster network | 28 | Client nodes have 2 FDR links, and each ESS node has 6 FDR links to a shared FDR IB cluster network |

The 1 GbE network was used for administrative purposes. All benchmark traffic flowed through the two Mellanox SX6036 InfiniBand switches. Each client node had two active InfiniBand ports. Both the 1 GbE port and the first InfiniBand port were used by Spectrum Scale for inter-node communication. Each client node InfiniBand port was on a separate FDR fabric for RDMA connections between nodes.

| Item No | Switch Name | Switch Type | Total Port Count | Used Port Count | Notes |

|---|---|---|---|---|---|

| 1 | SMC 8150L2 | 10/100/1000 Mbps Ethernet | 50 | 10 | The default configuration was used on the switch. |

| 2 | Mellanox SX6036 #1 | FDR InfiniBand | 36 | 14 | The default configuration was used on the switch. |

| 3 | Mellanox SX6036 #2 | FDR InfiniBand | 36 | 14 | The default configuration was used on the switch. |
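
The port counts in the transport and switch tables follow from the node counts and links per node. The sketch below reproduces them, assuming the InfiniBand links are divided evenly between the two switches, which is consistent with the 14 used ports listed for each.

```python
clients = 8
ess_nodes = 2

# Every node has one link to the 1 GbE administration network.
gbe_ports = clients + ess_nodes

# FDR InfiniBand links per node, from the transport table.
ib_links_per_client = 2
ib_links_per_ess = 6
ib_ports = clients * ib_links_per_client + ess_nodes * ib_links_per_ess

# Assumption: links are spread evenly over the two SX6036 switches.
ib_switches = 2
ib_ports_per_switch = ib_ports // ib_switches

print(f"1 GbE ports used:        {gbe_ports}")
print(f"InfiniBand ports used:   {ib_ports}")
print(f"InfiniBand ports/switch: {ib_ports_per_switch}")
```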

| Item No | Qty | Type | Location | Description | Processing Function |

|---|---|---|---|---|---|

| 1 | 16 | CPU | Spectrum Scale client nodes | Intel(R) Xeon(R) CPU E5-2650 v2 @ 2.60GHz 8-core | Spectrum Scale client, load generator, device drivers |

| 2 | 4 | CPU | Elastic Storage Server | IBM POWER8(R) 10-Core 3.42 GHz | Spectrum Scale NSD server, Spectrum Scale Native RAID, device drivers |

Each of the Spectrum Scale client nodes had 2 physical processors. Each processor had 8 cores with one thread per core. Each ESS node had 2 physical processors. Each processor had 10 cores with SMT2 enabled by default.
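
The processor description translates into the following core and hardware thread totals. This is a sketch based only on the counts stated above; it takes SMT2 to mean two hardware threads per core.

```python
# Client nodes: 8 nodes, 2 processors each, 8 cores, Hyper-Threading disabled.
client_cpus = 8 * 2
client_cores = client_cpus * 8
client_threads = client_cores * 1

# ESS nodes: 2 nodes, 2 processors each, 10 cores, SMT2 enabled.
ess_cpus = 2 * 2
ess_cores = ess_cpus * 10
ess_threads = ess_cores * 2

print(f"client nodes: {client_cpus} CPUs, {client_cores} cores, {client_threads} threads")
print(f"ESS nodes:    {ess_cpus} CPUs, {ess_cores} cores, {ess_threads} threads")
```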

| Description | Size in GiB | Number of Instances | Nonvolatile | Total GiB |
|---|---|---|---|---|
| Spectrum Scale client node system memory | 128 | 8 | V | 1024 |
| ESS node system memory | 232 | 2 | V | 464 |
| ESS node integrated NVRAM module | 4 | 2 | NV | 8 |
| Grand Total Memory Gibibytes | | | | 1496 |
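
The grand total can be reproduced from the per-component rows. The sketch below sums the table entries and separates volatile from nonvolatile memory; the tuple layout is chosen only for this example.

```python
# (description, size in GiB, instances, nonvolatile?)
memory_rows = [
    ("Spectrum Scale client node system memory", 128, 8, False),
    ("ESS node system memory", 232, 2, False),
    ("ESS node integrated NVRAM module", 4, 2, True),
]

total_gib = sum(size * count for _, size, count, _ in memory_rows)
nonvolatile_gib = sum(size * count for _, size, count, nv in memory_rows if nv)
volatile_gib = total_gib - nonvolatile_gib

print(f"volatile:    {volatile_gib} GiB")
print(f"nonvolatile: {nonvolatile_gib} GiB")
print(f"grand total: {total_gib} GiB")
```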

In the client nodes, Spectrum Scale reserves a portion of the physical memory for file data and metadata caching. A portion of the memory is also reserved for buffers used for node-to-node communication.

In the ESS nodes, Spectrum Scale reserves a portion of the physical memory for caching block data. The integrated NVRAM module is used to store Fast Write data and some block-level log data.

The ESS nodes each have an NVRAM that is used to temporarily store some of the modified data before it is written to the backend disk. Modified data designated as "fast writes" are stored initially in the NVRAM, while standard modified data go directly to the backend disk. The data in the NVRAM are mirrored between the nodes. In the case of a single node failure, the write data and any destaging to backend disk are handled by the still-active node. In the case of a general power outage, a capacitor on the PCI card holds enough charge to keep the card powered long enough for the NVRAM data to be destaged to a stable flash medium on the card. All of the modified writes in the benchmark are handled by the ESS.

The solution under test was a Spectrum Scale cluster optimized for streaming environments. The NSD client nodes were also the load generators for the benchmark. The benchmark was executed from one of the client nodes. All of the Spectrum Scale nodes were connected to a 1 GbE switch and two FDR InfiniBand switches. The Elastic Storage Server consisted of the NSD server nodes and 348 NLSAS drives in 6 disk expansion drawers attached to the nodes via 6 Gbps SAS connections. Each ESS node had a SAS connection to each DCS3700E disk storage enclosure. Each ESS node also included a PCI attached NVRAM card. The data in each NVRAM card was mirrored between the ESS nodes, which communicated with each other over the InfiniBand network.

Data protection and integrity features of the ESS were enabled during the benchmark execution. These features include disk scrubbing, NSD checksums and version numbers, double disk failure tolerance, and single storage enclosure fault tolerance.

The 8 Spectrum Scale client nodes were the load generators for the benchmark. Each load generator had access to the single namespace Spectrum Scale file system. The benchmark accessed a single mount point on each load generator. In turn, each of the mount points corresponded to a single shared base directory in the file system. The NSD clients processed the file operations, and the data requests to and from disk were serviced by the Elastic Storage Server.

IBM, IBM Spectrum Scale, IBM Elastic Storage, Power, and POWER8 are trademarks of International Business Machines Corp., registered in many jurisdictions worldwide.

Intel and Xeon are trademarks of the Intel Corporation in the U.S. and/or other countries.

Mellanox is a registered trademark of Mellanox Ltd.

None

Generated on Wed Mar 13 16:53:23 2019 by SpecReport
